Understanding KL Divergence: A Measure of Information Difference

KL divergence, or Kullback-Leibler divergence, is a fundamental concept in probability theory and information theory. It measures how one probability distribution differs from a second, reference probability distribution. Let’s dive into its meaning, applications, and a step-by-step example to make the concept more tangible.

What is KL Divergence?

KL divergence quantifies the difference between two probability distributions, typically denoted as (true distribution) and (approximated distribution). It answers the question:

How much extra information is needed to represent using ?

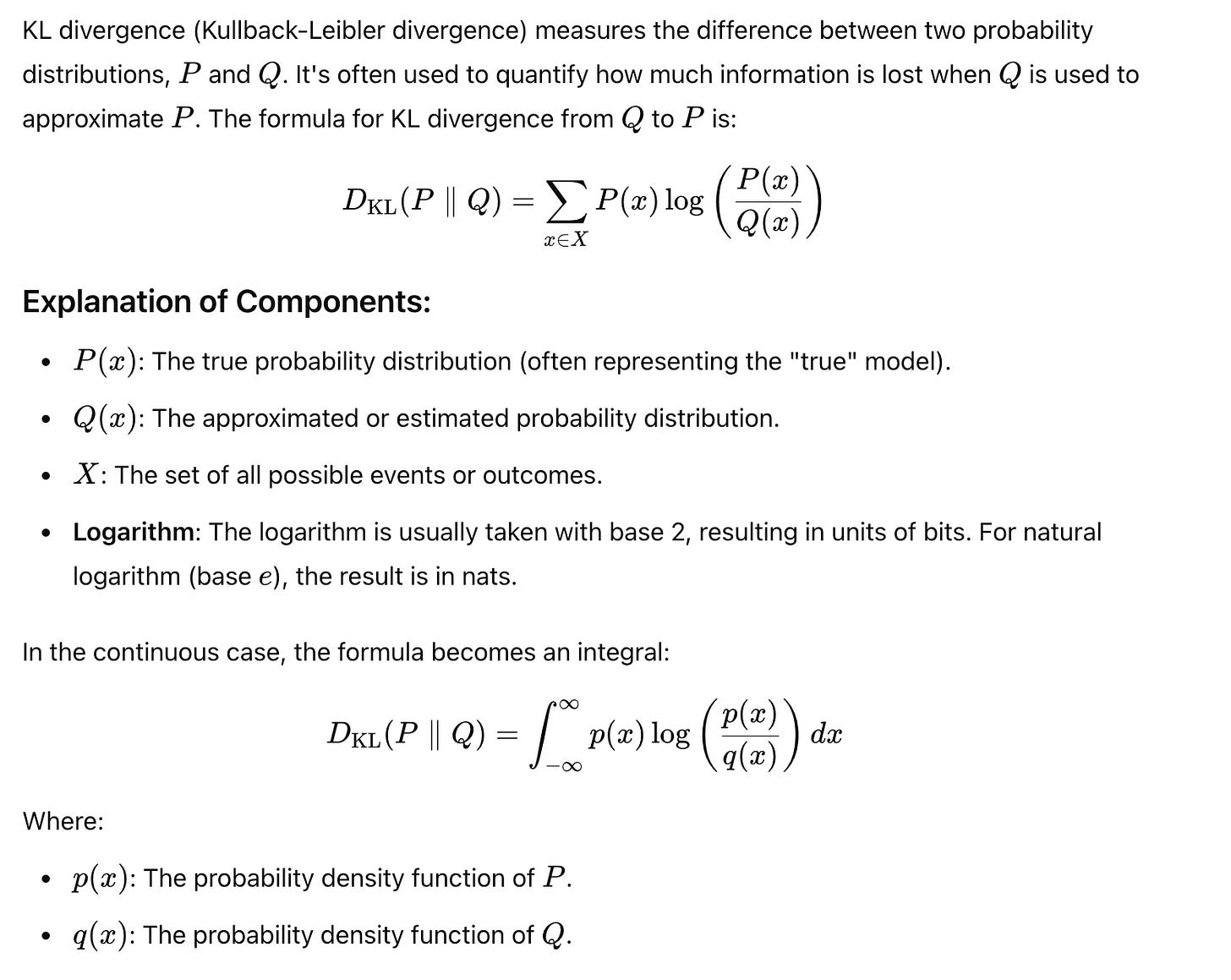

Formally, KL divergence is defined as:

In the continuous case:

Key Properties of KL Divergence

Non-Negativity: . It is zero only if for all .

Asymmetry: . This makes it a divergence, not a distance metric.

Interpretation: A higher value of KL divergence indicates that is a poor approximation of .

Applications of KL Divergence

KL divergence is widely used in machine learning, statistics, and information theory. Common applications include:

Model Evaluation:

Assessing how well a probabilistic model approximates a true distribution.

Regularization in ML Models:

Variational inference, as seen in Variational Autoencoders (VAEs), relies on minimizing KL divergence.

Reinforcement Learning:

Policy optimization often includes KL divergence to balance exploration and exploitation.

Information Compression:

Quantifying the inefficiency of using one probability distribution to encode another.

How is KL Divergence Used in Data Drift Monitoring?

When a machine learning model is deployed, KL divergence can track the difference between the training data distribution (reference) and the live (production) data distribution. This is crucial for maintaining model performance.

Detection of Drift:

If KL divergence increases beyond a certain threshold, it signals that the data has drifted. This could mean the model’s performance is at risk.

Continuous Monitoring:

By regularly computing KL divergence, organizations can detect and respond to data changes early.

Intuition Behind KL Divergence

KL Divergence helps to understand how much one distribution deviates from another:

Small KL Divergence means PPP and QQQ are very similar (low information loss when using QQQ to approximate PPP).

Large KL Divergence indicates a large difference, meaning using QQQ to approximate PPP results in high information loss.

Example: Calculating KL Divergence

Scenario:

Let’s compare two discrete probability distributions:

( P(x) = [0.4, 0.6]

( Q(x) = [0.5, 0.5]

Step 1: Apply the KL Divergence Formula

Step 2: Compute Each Term

Step 3: Simplify Using Logarithms

Step 4: Sum the Results

The KL divergence is small, indicating is a reasonable approximation of .

Advantages of KL Divergence

Sensitivity:

KL divergence is highly sensitive to even minor shifts in distribution, making it useful for data change-sensitive applications.

Early Detection:

It can help detect subtle forms of data drift before they escalate into significant issues.

Disadvantages of KL Divergence

False Alarms:

KL divergence may flag small deviations, such as outliers or noise, as data drift, leading to unnecessary alerts.

Asymmetry:

Since KL divergence is not symmetric (), interpretation can vary depending on the direction of comparison.

Conclusion

KL divergence is a powerful tool for comparing probability distributions. By understanding its properties and applications, you can leverage it to evaluate models, optimize algorithms, and quantify information differences effectively. Whether in machine learning or data science, KL divergence remains a cornerstone of probabilistic reasoning